At Interconnect Day 2019, Intel’s primary focus was the company’s new processor-to-processor interconnect, called Compute Express Link (CXL). It is similar to AMD’s Infinity Fabric technology, which is used in both CPUs and GPUs, e.g., Zen and Vega. The core difference, however, is that Intel’s CXL is aimed at replacing the aging PCIe interface.

Meet Intel CXL: Intel’s PCIe 5 Alternative

Although Intel’s CXL will run over the PCIe physical layer for universal compatibility, it will carry its own protocols on top of it instead of standard PCIe traffic, acting as an alternative with better scaling and efficiency. The first iteration of CXL will be built on PCIe Gen5, which means it’ll most likely arrive after Intel’s discrete graphics cards are announced.

Just like AMD’s Infinity Fabric, CXL is aimed at connecting multiple CPUs (or accelerators) so that they work as one coherent unit. AMD already uses Infinity Fabric inside both its Zen CPUs and its Vega GPUs, so there’s a very good chance that CXL will also be used to run multiple GPUs in parallel at some point in the future.

One of the core problems with SLI and CrossFire is that today’s graphics APIs like DirectX and Vulkan typically “see” only one GPU. To work around this, the drivers step in and use clever hacks such as alternate-frame rendering (AFR) or split-frame rendering (SFR), so that one GPU does half of the work while the other does the rest, but the API or the application still sees only one GPU.

However, this isn’t easy: many engines just don’t support AFR without major modifications, and even then techniques such as temporal filtering and AA aren’t compatible with it. Furthermore, synchronizing the two GPUs is a big headache that has to be handled separately for every game engine, and at times it’s just not possible for one reason or another.
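As a rough illustration of the two driver-level schemes mentioned above, here is a minimal Python sketch of how work could be divided. The function names are hypothetical and this is not how any real driver is implemented; it only shows the idea that AFR hands out whole frames in turn, while SFR cuts a single frame into bands.

```python
def alternate_frame_rendering(frame_index, gpu_count):
    """AFR (illustrative): each whole frame goes to one GPU, round-robin."""
    return frame_index % gpu_count


def split_frame_rendering(frame_height, gpu_count):
    """SFR (illustrative): cut one frame into horizontal bands, one per GPU.

    Returns a list of (first_row, end_row_exclusive) tuples.
    """
    base, extra = divmod(frame_height, gpu_count)
    bands = []
    start = 0
    for gpu in range(gpu_count):
        # Spread any leftover rows over the first few GPUs.
        rows = base + (1 if gpu < extra else 0)
        bands.append((start, start + rows))
        start += rows
    return bands


if __name__ == "__main__":
    # Which GPU renders frames 0..5 with two cards under AFR.
    print([alternate_frame_rendering(i, 2) for i in range(6)])
    # How a 1080-row frame is divided between two cards under SFR.
    print(split_frame_rendering(1080, 2))
```

With two cards, AFR alternates frames between GPU 0 and GPU 1, and SFR gives each card 540 rows of a 1080-row frame. Anything that depends on the previous frame (such as temporal filtering) cuts across the AFR split, which is why the engine issues described above appear.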

Multi-GPU setups also suffer from high latency because of improper syncing and slow inter-chip connections. This also forces vendors to mirror the textures on both cards, so only half of the total installed memory is actually usable. In short, as WccFTech points out, the cards don’t work as one coherent unit but as multiple units that keep running into syncing issues.

Intel CXL: Three Sub-protocols

Intel’s CXL comes with three dynamically multiplexed sub-protocols on a single link:

- CXL.io: This allows access to registers, interrupts, etc. across all the connected chips.

- CXL.cache: This allows a device to access and cache the processor’s memory.

- CXL.memory: This lets the processor access device-attached memory.

The first one is more about coherence through shared registers and interrupts for better OS support. The other two, however, should allow GPUs to share cache and VRAM with each other and pool their memory. Rather than mirroring the same data on every attached GPU, this should double or triple the usable VRAM, depending on the number of cards connected.
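The arithmetic behind mirroring versus pooling is simple enough to sketch. The helper below is hypothetical and idealized: it assumes identical cards and ignores any overhead a real pooled setup would have, so the pooled figure is an upper bound rather than a measured result.

```python
def usable_vram_gb(per_card_gb, card_count, pooled):
    """Idealized usable VRAM for a set of identical cards.

    Mirrored: every card holds the same data, so only one card's
    capacity is effectively usable.
    Pooled: the capacities add up (best case, no overhead assumed).
    """
    if pooled:
        return per_card_gb * card_count
    return per_card_gb


if __name__ == "__main__":
    for cards in (2, 3):
        mirrored = usable_vram_gb(8, cards, pooled=False)
        pooled = usable_vram_gb(8, cards, pooled=True)
        print(f"{cards} x 8 GB: mirrored {mirrored} GB, pooled up to {pooled} GB")
```

For two 8 GB cards this gives 8 GB when mirrored and up to 16 GB when pooled, which is where the "double or triple" figure comes from.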

CXL.cache and CXL.memory aim for lower latency by using a transaction layer that is separate from I/O. This should address the micro-stutter and poor frame-rate lows that come with SLI and CrossFire. Intel’s CXL also has a feature called “coherence bias” that lets the device engine access its own memory without going through the processor, further reducing latency.

Lastly, CXL coherency protocol is also asymmetric, meaning it’s compatible with non-Intel processors and can be used across various

Note: Intel is planning to connect accelerators to CPUs using CXL, but the same link could just as well be used with graphics cards.

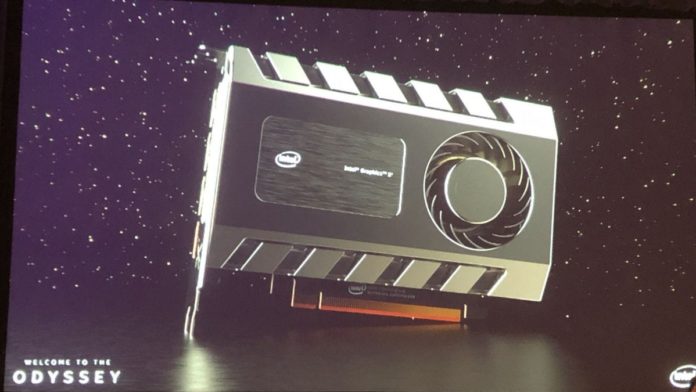

Intel Xe and CXL

It’s almost certain that Intel will leverage CXL to build much faster flagship products that use multiple GPUs. At a time when NVIDIA and AMD provide only bare-minimum support for multi-GPU configs, this could seriously shake things up, enabling a sudden leap in the visual capabilities of modern games as well as other 3D applications. We expect NVIDIA and AMD to adopt similar designs to keep up with Intel’s new-found ambition.

Source: WccFTech